Why TikTok’s Detection System Matters More Than the Platform Rules

TikTok publishes community guidelines that prohibit artificial inflation of metrics. But the guidelines are not what stops low-quality services from working — the detection system is. The algorithm evaluates engagement quality in real time, and accounts that receive engagement that fails its behavioral audit see their content distribution reduced automatically, before any human review takes place.

This means the question is not whether TikTok has rules against fake engagement. The question is: what signals does the detection system actually measure, and at what thresholds does it act? Understanding those mechanics is what separates a well-informed growth strategy from one that creates suppression risk.

The Four Core Detection Signals TikTok’s Algorithm Audits

1. Velocity Patterns: How Fast Engagement Arrives

The For You Page (FYP) distribution model generates organic engagement in waves: a video gains views gradually as it is tested across progressively wider audience segments. Sudden engagement spikes that do not match any corresponding distribution event appear to be among the strongest signals the system uses to identify inauthentic activity.

An account that gains 500 followers in 40 minutes with no corresponding spike in video views or profile visits has created a velocity pattern the algorithm has no organic explanation for. The system does not need certainty; a statistical anomaly is enough to initiate a suppression response.

2. Session Quality: What Engaged Accounts Actually Do

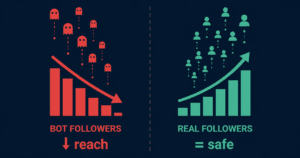

TikTok tracks what users do after engaging with a video or following an account. Valid engagement comes from accounts with consistent session behavior: watch history, interaction patterns, and time-on-app data that match normal user behavior. Accounts that follow a profile and then show zero subsequent activity — no video views, no interaction, no session data — are classified as low-quality signals within the engagement graph.

This is one reason follower count alone is a weak growth metric. The algorithm weighs the quality of engagement events, not just their volume, so ten followers from accounts with strong session histories can have more impact on FYP distribution than 200 followers from accounts with no behavioral history.

3. Device and IP Diversity: The Fingerprint Problem

Inauthentic engagement operations typically run from limited device pools or shared IP infrastructure. TikTok’s system flags engagement clusters that originate from the same device identifiers, the same IP ranges, or the same network segments. When a high percentage of engagement events on a single video trace back to a narrow device or IP cohort, the system treats the cluster as coordinated inauthentic behavior.

Quality delivery services use real account networks distributed across genuine device environments — not emulators or shared infrastructure. Device diversity is not just about avoiding detection; it is what determines whether follower or view signals register as valid within the algorithm’s engagement graph.

4. Engagement Ratio Consistency: The Account-Level Audit

TikTok monitors the relationship between follower count, video view rate, like rate, and comment rate at the account level. An account with 50,000 followers whose videos consistently receive 200 views has a follower-to-engagement ratio that sits far outside the statistical norm for its size category. The algorithm uses this ratio as a baseline health signal — and accounts with persistent ratio anomalies face reduced distribution even without triggering the velocity or device flags.

This is the downstream risk of low-quality follower services: the followers themselves may not trigger immediate suppression, but the ratio distortion they create causes a long-term drag on organic reach. For a detailed analysis of how engagement rate changes after follower purchases, see “TikTok engagement rate after buying followers.”

Content Suppression vs. Full Account Restriction: What Each Looks Like

Level 1 — Content-Level Distribution Suppression

The most common outcome of triggering a detection flag is not account suspension — it is content suppression. The affected video stops receiving FYP distribution. It remains visible on the profile but is removed from the recommendation queue. The account itself continues to function normally. Most users experience this as “the video just stopped getting views” with no error message or platform notification.

Content-level suppression is usually temporary if the triggering event was a single anomaly. The system re-evaluates distribution eligibility as new content is posted and engagement patterns normalize.

Level 2 — Account-Level Reach Suppression

When anomalous engagement patterns repeat across multiple videos or over an extended period, the suppression escalates to the account level. The account’s new content receives limited distribution even when it performs well on watch time and interaction signals. This is commonly referred to as a shadowban, though TikTok does not use that term officially. For a complete breakdown of how to diagnose and recover from this state, see “TikTok shadowban 2026: how to diagnose, fix, and prevent algorithm suppression.”

Level 3 — Content Removal and Account Action

Full removal or account termination is reserved for systematic, large-scale inauthentic behavior — typically detected through coordinated campaigns that affect multiple accounts simultaneously. Individual users using third-party growth services rarely face this outcome from a single engagement event. The more realistic risk for most users is the Level 1 and Level 2 suppression states described above.

How Delivery Methodology Determines Risk Level

The detection system does not evaluate whether a user purchased engagement — it evaluates whether the engagement event passes its behavioral audit. This means the risk level of any growth service is determined entirely by its delivery methodology, not by the fact that it was purchased.

| Delivery Factor | High-Risk Approach | Algorithm-Safe Approach |
|---|---|---|
| Delivery speed | All volume delivered within minutes | Staggered over 24–72 hours |
| Account source | Bot accounts, no session history | Real accounts with behavioral history |
| Device diversity | Shared IP / emulator clusters | Distributed across genuine devices |
| Session behavior | No post-engagement activity | Normal session patterns maintained |
| Volume calibration | No baseline consideration | Calibrated to account’s current baseline |

The services available through SMMNut are evaluated against the algorithm-safe criteria in the table above. For a full overview of TikTok growth services and how each is structured, see the complete TikTok services overview on SMMNut.

Practical Steps to Reduce Detection Risk

Start With a Volume That Matches Your Baseline

The most common mistake is ordering a volume that has no relationship to the account’s current distribution rate. An account averaging 300 views per video does not need 10,000 views delivered to one post. The velocity delta is the detection trigger. Starting with a volume proportional to your current performance and scaling gradually is the safest approach regardless of which service type you use.

Maintain Consistent Content Activity During Delivery

The algorithm monitors account-level behavior holistically. An account that goes silent on content while receiving sudden engagement events creates a behavioral inconsistency. Posting normally during and after a delivery period keeps the account-level audit signals in the expected range.

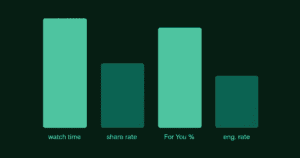

Evaluate Session Behavior After Delivery

After any growth service delivery, monitor your analytics for watch time rate and interaction rate on subsequent organic videos. If those metrics are stable or improving, that is a reasonable sign the delivery did not trip the engagement quality audit. If watch time drops significantly, the engagement event may have created a ratio signal that is affecting distribution. For more on interpreting these metrics correctly, see “how to read TikTok analytics and fix what the algorithm is penalising.”

What “Safe” Actually Means in the Context of TikTok Growth Services

Safety in growth services is not a binary state — it is a risk profile determined by delivery quality, volume calibration, and account context. No service provider can eliminate all theoretical risk because the detection system continuously evolves. What responsible providers can do is apply a delivery methodology that minimizes the behavioral signals the system flags.

The clearest indicator of a service’s actual safety is its delivery architecture: does it use real accounts, does it stagger delivery over time, and does it calibrate volume to the account’s current baseline? If the answer to all three is yes, the risk profile is substantially lower than the industry default.

For a complete guide to evaluating follower services within this framework, see the guide on whether buying TikTok followers hurts algorithm reach.